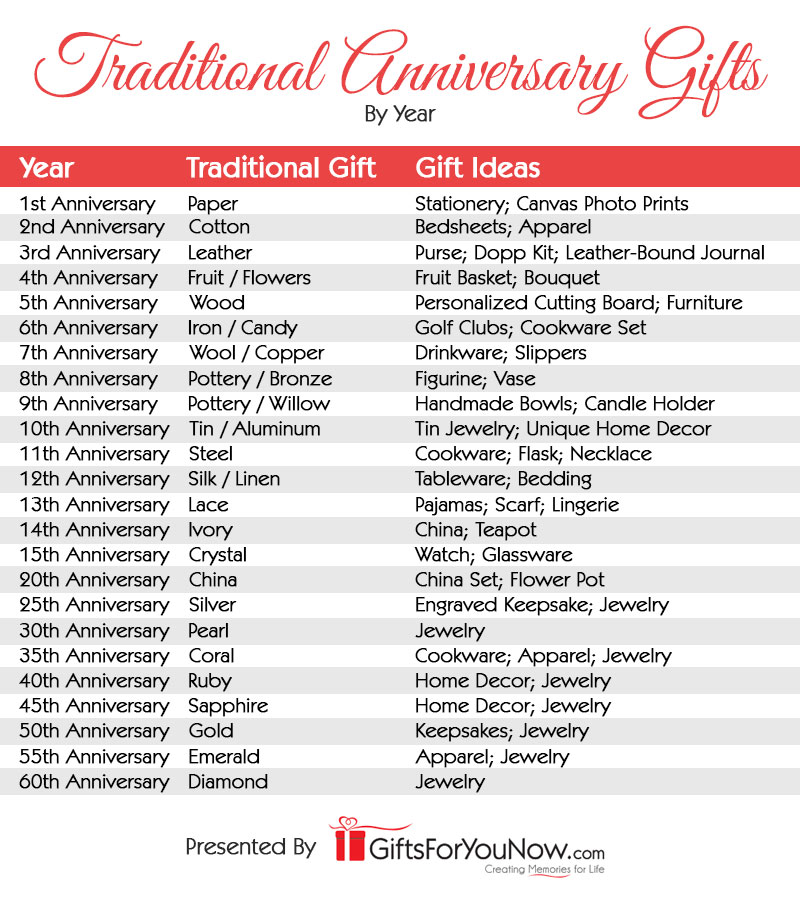

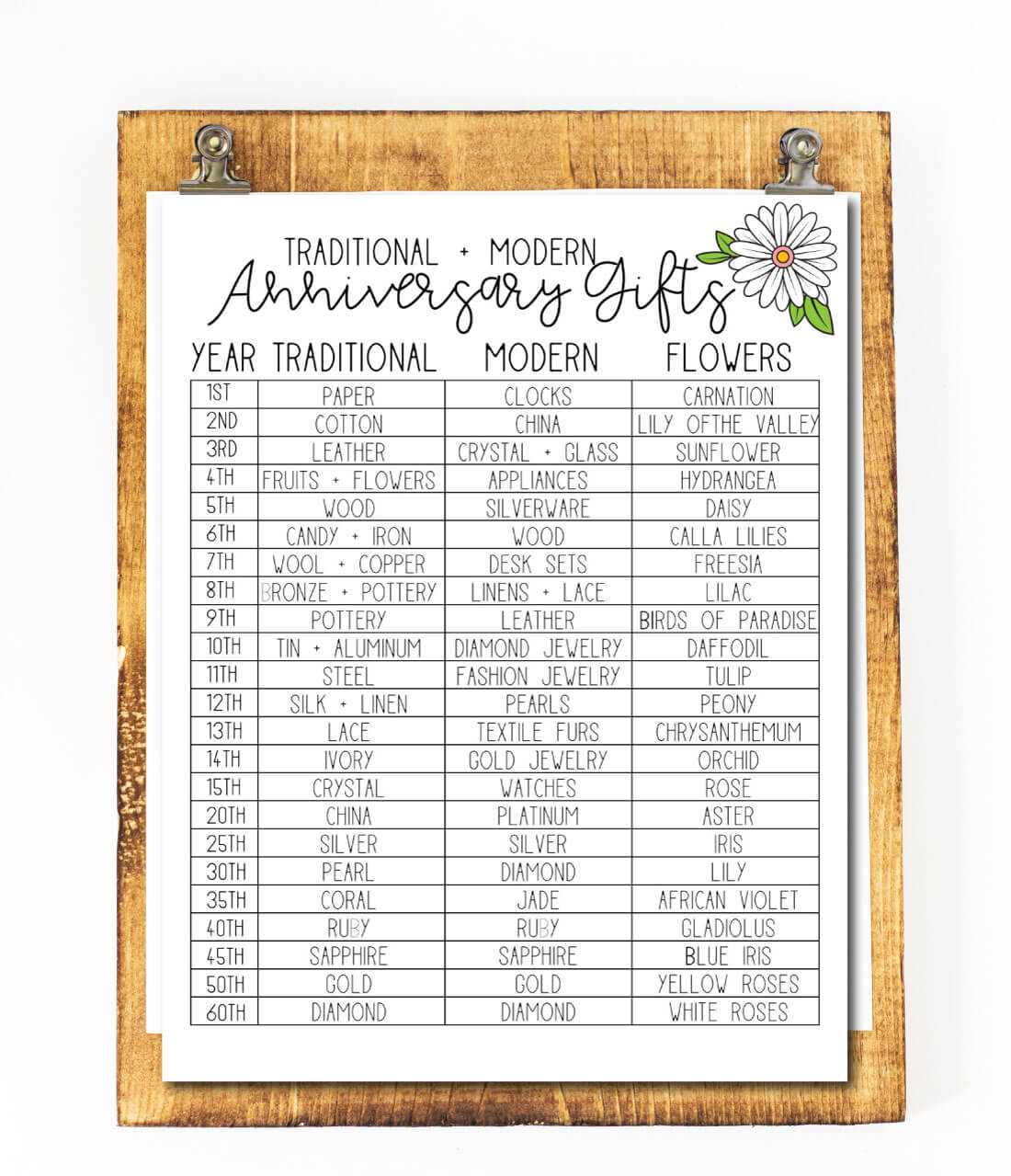

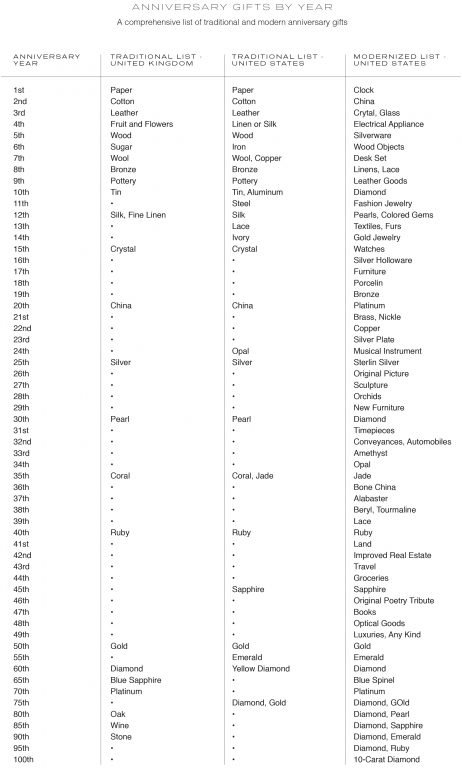

Anniversary Gifts by Year From 1st to 60th | Wedding anniversary gift list, Traditional anniversary gifts, Year anniversary gifts

Amazon.com: 10th Anniversary Aluminum Gifts for Her/Him, 10 Year Wedding Anniversary for Wife Couple Parents, 4" Ring Holder Dish Jewelry Tray - Personalized Tin Ten Years Anniversary Decorations Ideas Gift

Wedding Anniversary Gifts by Year: The Ultimate Guide | Year anniversary gifts, Anniversary gifts, Birthday gifts for husband