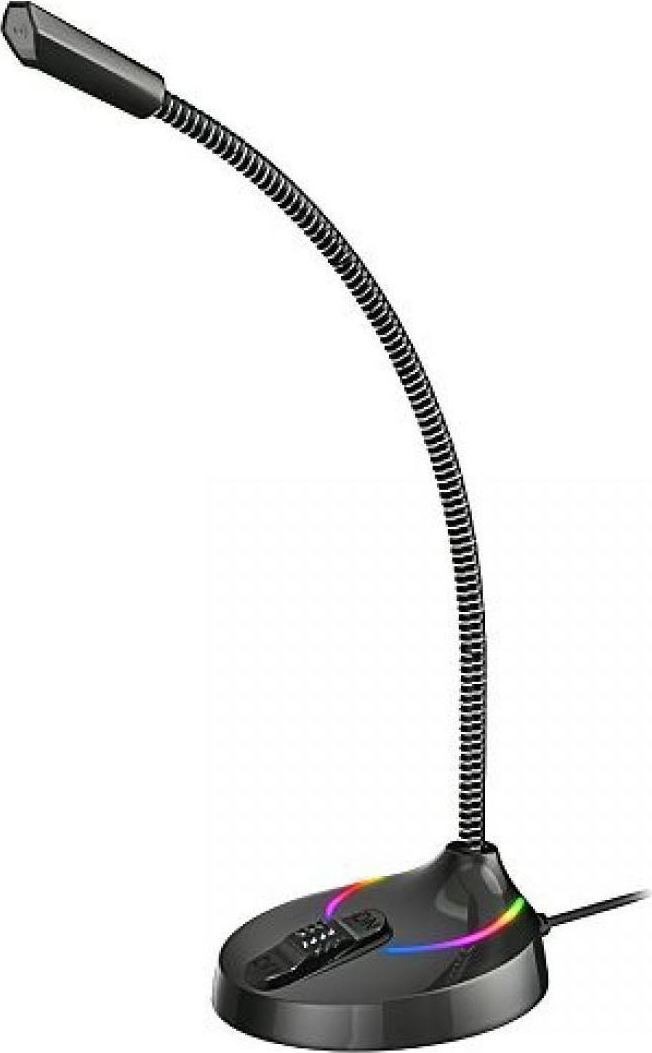

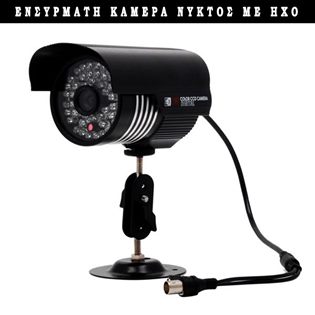

Waterproof CCD security camera with a very good microphone - Mount and transformer < Wired security cameras | Clevermarket

Hand Holding a Microphone and Showing Thumbs Up in Front Of - Stock Photo © terovesalainen #208081862