Zoe Laskari: Her cinematic tens and the two zeros, as she herself graded them - ertnews.gr

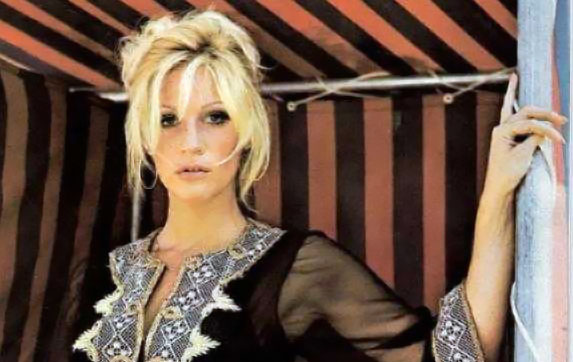

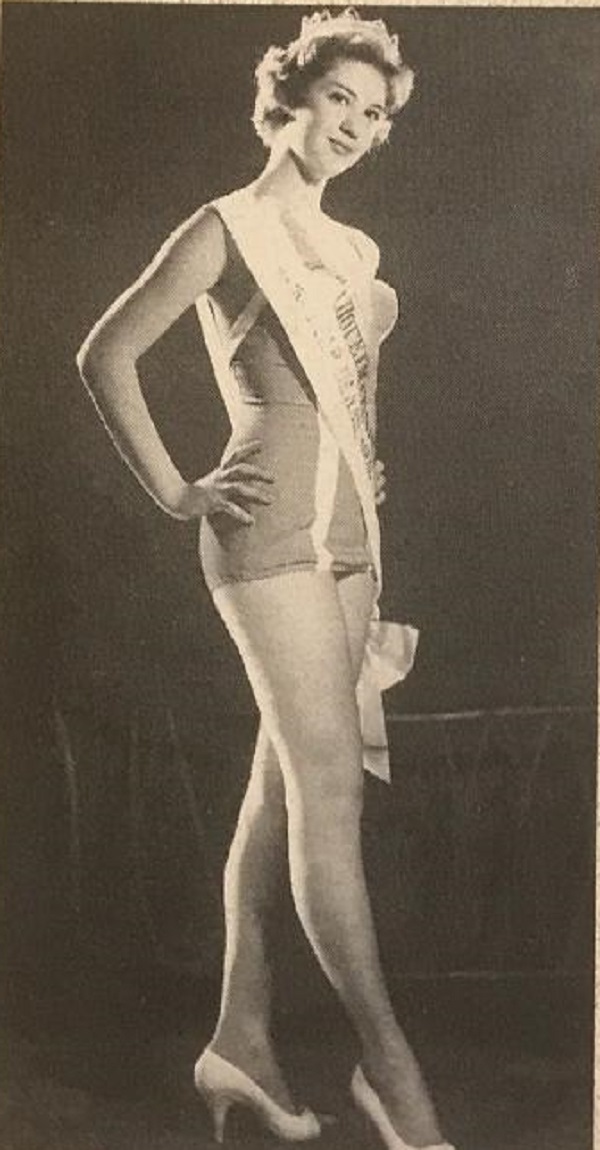

![Zoe Laskari: 8 landmark films, from drama to musical and comedy [images & video] - iefimerida.gr](https://www.iefimerida.gr/sites/default/files/styles/in_article/public/2017-08/stefania_0.jpg?itok=mXDpHD39)

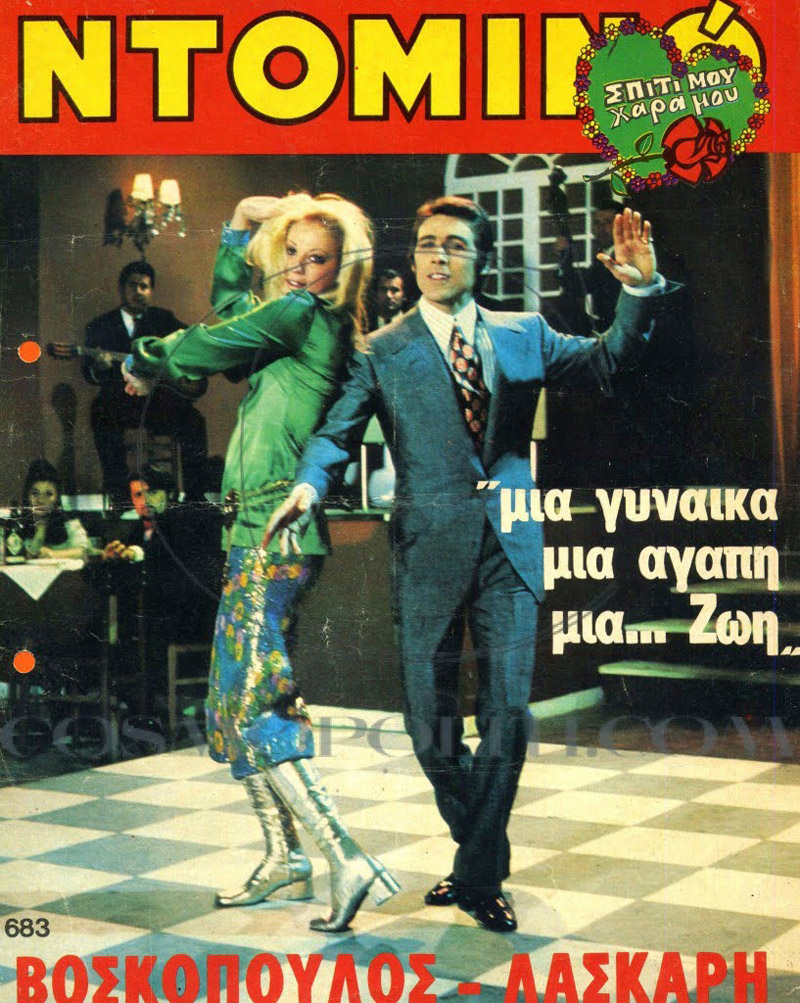

Zoe Laskari: 8 landmark films, from drama to musical and comedy [images & video] - iefimerida.gr

Χωρίς Ταυτότητα [Without Identity] (1963), Zoe Laskari, Alekos Alexandrakis 🎬 Classic Greek Films, Greek Movies - YouTube

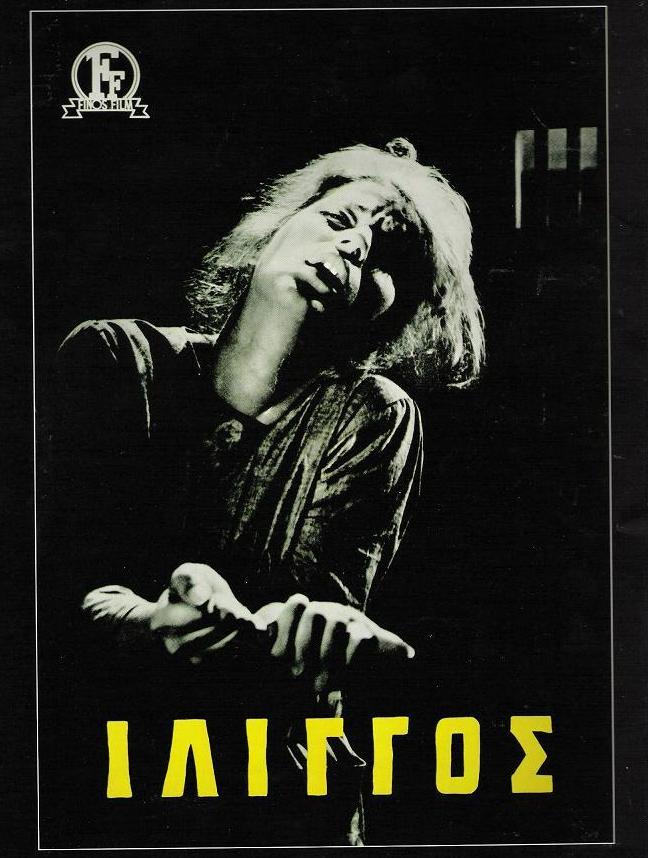

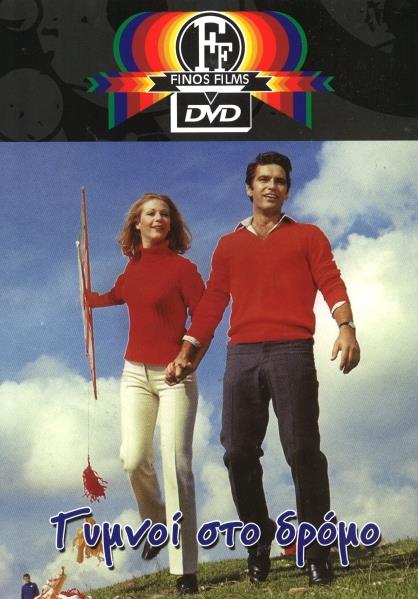

Zoe Laskari: Her 22 Finos Film movies and her record in leading female roles | Gossip-tv.gr