ΓΕΩΡΓΑΚΟΠΟΥΛΟΣ Γ.Β. & ΣΙΑ Ο.Ε. | Body Repair Parts – Used - Everything, Auto Parts | Sindos, Thessaloniki | vrisko.gr

ΓΕΩΡΓΑΚΟΠΟΥΛΟΣ Γ.Β. Α.Ε. | Body Repair Parts – Used - Everything, Auto Parts | Athens, Attica | vrisko.gr

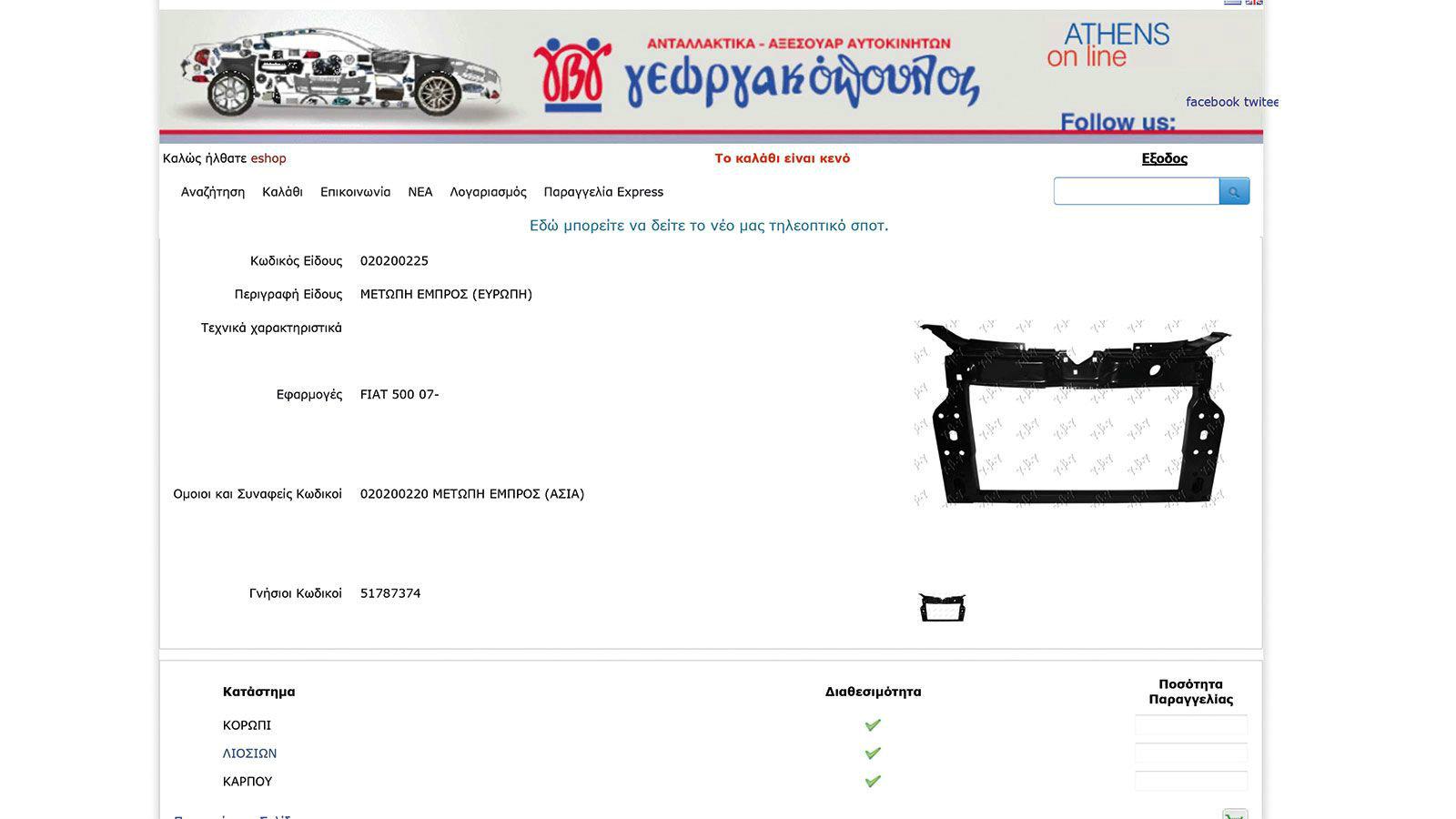

043805812 - 6Y6945095 Rear left (driver's side) tail light, FABIA 6Υ6945111 IM, used - Ερωτόκριτος Auto Parts | VW SEAT SKODA AUDI

Γ.Β. Γεωργακόπουλος ΑΕ - Authentic corporate photography & video with a stunning final visual result - Bee-360°

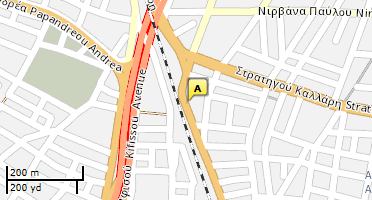

Γεωργακόπουλος Γ.Β. Α.Ε. - address, 🛒 customer reviews, opening hours and phone number - Stores in Athens - Nicelocal.gr

Γ.Β. Γεωργακόπουλος chose Soft1 Cloud ERP Series 5 | Reports and news on the Economy, Business, the Stock Market, and Politics